For this newsletter I’d intended to write about Discover, collecting everything I know about maximising visibility in Google’s personalised recommendation feed. As part of that process I reached out to Lily Ray, who is one of the industry’s true experts on all things Discover.

Turns out she had an article in the works about Discover, which has now been published on Moz with the title How to Drive Traffic in Google Discover: The Ultimate Guide.

And yes, it’s an ultimate guide. It covers everything I wanted to cover and loads more. So for now I don’t need to write about Discover, as Lily’s piece is quite simply the definitive piece on that topic.

So instead I moved on to the next topic on my list, which is an area I get many questions about whenever I deliver training for clients: user-generated content, and specifically comments underneath articles.

User-generated content (UGC for short) has been a staple of the internet since the beginning. From bulletin boards to guestbooks and comments, the internet and the web have always allowed users to post and participate in various ways.

On the modern web, UGC is everywhere: comments, Q&As, forums and bulletin boards, guest articles, wikis, reviews – UGC comes in an endless variety of flavours.

Social media platforms are pretty much entirely UGC. Sites like Reddit and Quora are built on the contributions of their audience, with (almost) all their content generated by their users. Every page on Wikipedia is a combined effort from thousands of volunteers.

Essentially, UGC is open source for the web’s content.

UGC can serve as a deliberate tactic to generate vast amounts of content – which can rank in Google and drive traffic to the website – with relatively little effort. Instead of having to pay journalists and writers, you can just outsource the production of content to your audience.

The biggest risk is the potential abuse of UGC for unsavoury purposes. Oversight is required, which means you still need to invest in moderation resources to safeguard the quality of your site’s UGC.

For the purpose of this newsletter, I’ll focus on the most common form of user-generated content on publisher sites: reader comments underneath articles.

The first question I get asked most often is ‘Can Google see comments?’ And the answer is, it depends.

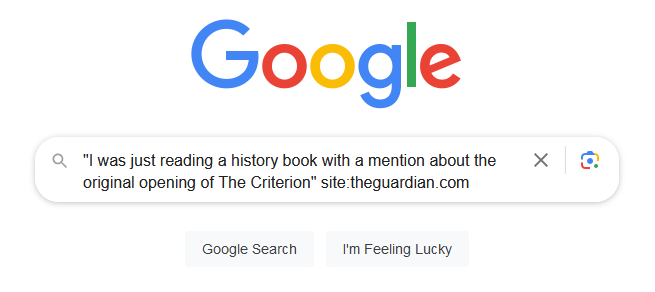

You can easily test if Google can ‘see’ comments on your site. Simply copy a sentence from one of your user comments, paste it in a Google search in double quotes, and add the site:[example.com] operator to limit the results to your own domain.

An example:

This query is looking for a specific sentence from a comment on this article, and indeed Google shows it:

You may need to try snippets from comments on several different articles, and should always select articles that are at least a few weeks old. For Google to see and index comments, it usually needs to render pages as part of its tiered indexing process. The rendering phase of content is often delayed – it can take weeks for Google to fully render a newly published webpage.

So pick an old(ish) article that was relatively popular (so has a higher probability of being rendered), copy a snippet from a top comment underneath the article, paste it in a Google search box with quotes, and use the site: operator to focus the search on your own website.

If Google shows the article the comment belongs to, then yes, Google indexes your comments.
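If you want to run this check across a batch of articles, the query can be assembled in a few lines of Python. This is only a minimal sketch: the function names, the sample comment text, and example.com are placeholders of mine, and you would still paste the result into a browser and read the results yourself.

```python
from urllib.parse import quote_plus


def build_comment_check_query(snippet: str, domain: str) -> str:
    """Return an exact-match query for the snippet, limited to one domain."""
    # Drop straight double quotes (they would end the phrase early)
    # and collapse repeated whitespace.
    cleaned = " ".join(snippet.replace('"', " ").split())
    return f'"{cleaned}" site:{domain}'


def build_search_url(query: str) -> str:
    """Return a Google search URL for the query, ready to open in a browser."""
    return "https://www.google.com/search?q=" + quote_plus(query)


# Placeholder comment text and domain, for illustration only.
query = build_comment_check_query(
    "I never knew comments could rank on their own", "example.com"
)
print(query)
print(build_search_url(query))
```

Keep the snippet short and distinctive; a single sentence from a top comment is usually enough.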

When we’ve answered the first question, the logical follow-up question is whether Google should be able to index comments. And, again, it depends.

Google has confirmed that it sees comments as part of the webpage’s content. In fact, Google has gone on record to say they like seeing comments. In the words of Google’s John Mueller:

“What I think is really useful there with those comments is that oftentimes people will write about the page in their own words and that gives us a little bit more information on how we can show this page in the search results. So from that point of view I think comments are a good thing on a page.”

I’ve had this experience myself, where one of my blog posts ranked top in Google results for a specific query that appeared only in the comments and not in the actual piece.

When I migrated my site to a new platform I chose not to migrate the comments across, and as a result I lost this particular ranking (which I’m genuinely a bit sad about).

But this also brings up a second point: Not all comments add value.

If you moderate your comments closely, you can ensure the published comments adhere to minimum standards and avoid spam, abuse, and profanity. With good moderation practices, it’s definitely worth allowing Google to index your comments as these can add value and enable broader rankings for your article in Google’s ‘ten blue links’ evergreen rankings.

However, if you have imperfect (or no) moderation, or your comment section has a certain community culture that features language which may be interpreted as abusive and profane, it might be safer to make your comments invisible for Google.

I have seen websites suffer from algorithm updates when their comment sections had specific features that were acceptable within the cultural norms of that site’s community, but to outsiders could appear to be abusive and harmful.

Google prefers to err on the side of caution. When Google sees an abundance of content on a site that could be interpreted as harmful, it can downgrade that website’s visibility in its search results – including in Top Stories if it’s a news publisher.

So you’ll have to take an objective look at the comments underneath your articles, and judge whether they’re acceptable for a broad audience. If the answer is ‘maybe not’, you should consider removing your comments from Google’s sight.

Which brings us to the third question: How can Google be prevented from seeing the comments on your site? The answer is, you guessed it… it depends.

Blocking Google from indexing comments is a technical issue. The best approach depends on how your site has implemented its comments function.

A common approach is to load comments through JavaScript. When specific JS files need to be loaded for your comments to appear on your articles, you could prevent Google from loading those JS files by blocking them with a robots.txt disallow rule. For example:

User-agent: Googlebot

Disallow: /*comments.js

Some websites load comments with a separate URL attached to an article, for example with a URL parameter added to the article URL or in a subfolder (like Substack’s comment function). These too can be blocked in robots.txt:

User-agent: Googlebot

Disallow: /*?comments=yes

Disallow: /*/comments

Alternatively, if your comments are on a separate URL you can add a noindex meta tag to this URL to prevent Google from indexing them:

<meta name="robots" content="noindex,nofollow">

If your comments are implemented on your site with a hashed URL, for example /article-url-here#comments, then blocking becomes more challenging. Google ignores hashes (and everything after a hash symbol) in URLs, so as a first step you’ll need to test whether Google can see the comments.

If Google indexes your comments, you may need to change how comments are implemented on your site to enable a specific blocking mechanism.
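To see why hashed comment URLs are awkward to block, it helps to look at what is left of the URL once the hash is removed. The short Python sketch below uses the standard library’s urldefrag on a made-up example.com address; it only illustrates how the URL splits, not how Google processes it internally.

```python
from urllib.parse import urldefrag

# Hypothetical URLs, for illustration only.
comment_url = "https://example.com/article-url-here#comments"
article_url = "https://example.com/article-url-here"

# urldefrag splits a URL into the part before "#" and the fragment after it.
base, fragment = urldefrag(comment_url)

print(base)                 # the URL that is actually requested
print(fragment)             # the part after the hash
print(base == article_url)  # whether both point at the same address
```

Because the part before the hash is identical to the article URL, a robots.txt rule or noindex tag aimed at the ‘comments URL’ would hit the article itself.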

Some sites use a third-party comments feature like Disqus. I’m generally not a fan of those: not only are such external comment features often terribly slow, with a negative impact on your site’s Core Web Vitals, they can also come with additional problematic ‘features’.

The only upside is that, by virtue of trying to hide their footprint from Google, third-party comment features frequently make themselves unindexable for search engines. I’m not sure that payoff is worth it, though.

After the email edition of this newsletter went out, Will reached out and reminded me that comments are explicitly mentioned as page quality signals in Google’s Quality Raters Guidelines.

In these guidelines, Google states: “If a specific page on a website has unrelated ‘spammed’ comments, the page should be rated Lowest.”

So even if Google can’t see bad comments but they’re shown to users, they can still affect your site’s quality signals as perceived by the machine learning systems that the quality raters are improving.

If you lack the resources to properly moderate your comments, it might be safer to get rid of comments altogether.

So far I haven’t discussed an additional aspect to consider with comments: how they affect Googlebot’s crawling of your site. Once again, this depends on exactly how comments have been implemented.

Some websites generate a unique URL for every comment that is posted. This could create enormous amounts of new URLs for Googlebot to discover, crawl, and potentially index. This represents a potential crawl waste issue, and adds an additional element to your SEO considerations.
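One rough way to gauge the scale of this is to count how many Googlebot requests in your server logs go to comment URLs. The Python sketch below assumes access logs in the common ‘combined’ format and a hypothetical comment URL pattern (a /comment or /comments path segment, or a comments= parameter); both are assumptions you would need to adapt to your own setup. It also trusts the user-agent string, which can be spoofed, so treat the numbers as an estimate.

```python
import re
from collections import Counter

# Hypothetical pattern: adjust to however your site builds comment URLs.
COMMENT_URL = re.compile(r"(/comments?(/|$|\?)|[?&]comments?=)")

# Pulls the request path and user agent out of a combined-format log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_crawl_share(lines):
    """Count Googlebot requests to comment URLs versus all other URLs."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue  # skip unparseable lines and non-Googlebot traffic
        kind = "comment" if COMMENT_URL.search(match.group("path")) else "other"
        counts[kind] += 1
    return counts


GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"

# Made-up sample lines, for illustration only.
sample_log = [
    f'66.249.66.1 - - [01/Nov/2023:10:00:00 +0000] "GET /news/story-1 HTTP/1.1" 200 5120 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [01/Nov/2023:10:00:01 +0000] "GET /news/story-1/comments HTTP/1.1" 200 900 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [01/Nov/2023:10:00:02 +0000] "GET /news/story-1?comments=yes HTTP/1.1" 200 900 "-" "{GOOGLEBOT}"',
    f'203.0.113.7 - - [01/Nov/2023:10:00:03 +0000] "GET /news/story-1/comments HTTP/1.1" 200 900 "-" "{BROWSER}"',
    f'66.249.66.1 - - [01/Nov/2023:10:00:04 +0000] "GET /news/story-2/comment/123 HTTP/1.1" 200 300 "-" "{GOOGLEBOT}"',
]

counts = googlebot_crawl_share(sample_log)
print(counts["comment"], "comment URLs,", counts["other"], "other URLs")
```

If comment URLs account for a large share of Googlebot’s requests over a few weeks of logs, that is a reasonable sign the crawl effort is being spent in the wrong place.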

If you’re finding that Google is spending an inordinate amount of crawl effort on comment URLs, you could consider blocking Googlebot from your comments with the aforementioned robots.txt disallow rules or a similar mechanism.
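Before deploying disallow rules like the ones shown earlier, it’s worth checking which URLs they would actually catch. The Python sketch below is a simplified approximation of wildcard matching as Google documents it (‘*’ matches any run of characters, a trailing ‘$’ anchors the end, and everything else is a prefix match). It is not Google’s parser: it ignores Allow rules and rule precedence, and the function names and test paths are my own.

```python
import re


def robots_pattern_to_regex(pattern: str) -> re.Pattern:
    """Translate a robots.txt path pattern into a regex (simplified sketch)."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # "*" matches any run of characters; every other character is literal.
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def is_blocked(url_path: str, disallow_patterns: list[str]) -> bool:
    """Return True if any Disallow pattern matches from the start of the path."""
    return any(robots_pattern_to_regex(p).match(url_path) for p in disallow_patterns)


# The three example rules from earlier in this newsletter.
rules = ["/*comments.js", "/*?comments=yes", "/*/comments"]

# Made-up paths, for illustration only.
for path in [
    "/static/js/comments.js",
    "/news/story-1?comments=yes",
    "/news/story-1/comments",
    "/news/story-1",
    "/news/comments-policy",
]:
    print(path, "blocked" if is_blocked(path, rules) else "allowed")
```

Note that /news/comments-policy is also reported as blocked, because /*/comments is a prefix match. Checks like this tend to surface that kind of collateral damage before it reaches your live robots.txt file.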

If you have any thoughts of your own on the value of comments and how you would approach it, please leave a comment. 😉

The third annual News and Editorial SEO Summit was held last month on October 11th and 12th, and I’m proud to say it was another smashing success. We had 546 registered attendees, of whom 490 joined the live event at various stages.

The event had a truly global audience, with attendees from 53 different countries: from New Zealand to the USA, from Norway to Argentina! Every continent was represented at NESS 2023 (except for Antarctica – perhaps one day!). It’s amazing to have such a diverse audience from around the world be part of the event.

The chat was very lively on both days – there were over 1600 messages posted in the chat in total, and a whopping 578 questions asked with the Q&A feature across all sessions! Amazing engagement from all our attendees.

The ever-awesome Jessie & Shelby have written roundups of both days of the conference, which you can read on their excellent WTF is SEO? website.

We’re already planning the 2024 edition and have dates reserved: October 29th & 30th 2024. Save the dates in your calendar and keep an eye on the NewsSEO.io website for details.

That’s it for this edition. Thanks for reading and subscribing, and I’ll see you at the next one!