Late last year, as part of the testimony of Pandu Nayak, Google’s Vice President of Search, in an antitrust trial, some very interesting internal documents were revealed to the public. These documents confirmed aspects of Google’s inner workings that the SEO community had long suspected but that Google had never officially acknowledged.

Several parts of these internal documents stood out to me because they help explain the chaos we sometimes see in Google’s search results. There’s a rising tide of complaints that Google’s results are degrading, with obvious spam and low-quality content becoming more prominent.

We feel that this is something Google should be able to fix, as it’s immediately clear to us that these spam webpages are full of awful content. If we can see it’s bad, why can’t Google?

The reason is that Google just isn’t that good at understanding content.

Google struggles to read and evaluate content the way humans do. Yes, Google can identify and extract keywords (topics & entities) and get a gauge of what the content is about, but actually understanding content in a semantically meaningful way – and making evaluations about the content’s quality and usefulness – remains a tough challenge.

But what Google is good at is understanding how people interact with content. Specifically, Google can see when users click on a search result, and whether they then return to the search results and click on a different page.

This ‘dialogue’ between search results and click behaviour is what allows Google to fine-tune and improve their results.

That’s Google’s ‘magic’. The scale of Google search means they have billions and billions of clicks that they can analyse and extract learnings from to help improve their results and show content that users have a positive engagement with.
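As a rough illustration of how click behaviour could be turned into a signal, here’s a minimal Python sketch. It assumes a hypothetical click log where each search session is simply the list of result URLs a user clicked, in order, and it applies a deliberately simple, hypothetical heuristic: a result the user clicked and then abandoned (returning to the results to click something else) counts as a ‘bounce’, while the last result clicked is treated as the one that satisfied the search. Google’s actual systems aren’t public and are far more sophisticated; the function names, URLs, and heuristic here are purely illustrative.

```python
from collections import Counter


def label_clicks(session: list[str]) -> dict[str, str]:
    """Label each clicked URL in one search session (clicks listed in order).

    Hypothetical heuristic: any result followed by another click was
    abandoned ('bounced'); the final click ended the search ('satisfied').
    If a URL is clicked more than once, its last occurrence wins.
    """
    labels: dict[str, str] = {}
    for i, url in enumerate(session):
        labels[url] = "satisfied" if i == len(session) - 1 else "bounced"
    return labels


def satisfaction_rates(sessions: list[list[str]]) -> dict[str, float]:
    """For each URL, the share of sessions clicking it in which it 'satisfied' the user."""
    clicked = Counter()
    satisfied = Counter()
    for session in sessions:
        for url, label in label_clicks(session).items():
            clicked[url] += 1
            if label == "satisfied":
                satisfied[url] += 1
    return {url: satisfied[url] / clicked[url] for url in clicked}


# Three hypothetical searches for the same query.
sessions = [
    ["spam.example", "good.example"],   # bounced off spam, then stayed on good
    ["good.example"],                   # satisfied straight away
    ["spam.example", "other.example"],  # bounced off spam again
]
print(satisfaction_rates(sessions))
# → {'spam.example': 0.0, 'good.example': 1.0, 'other.example': 1.0}
```

Aggregated over enough searches, even a crude rule like this separates pages people keep bouncing off from pages that end their search.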

(Side note: Chrome probably helps with this, as it collects its users’ click data.)

The SEO community has suspected Google uses click data in their ranking systems for many years. This piece from Rand Fishkin back in 2014 showed how a click experiment managed to directly influence a webpage’s rankings in Google.

While Google never fully denied using clicks, on many occasions Googlers have tried to cast doubt on clicks as a viable data source using woolly language and roundabout half-denials. It was clear that Google didn’t want us to know for sure that they use click data.

Looking back, those efforts from Google to undermine our assumptions were basically an attempt to gaslight the SEO industry into believing clicks didn’t matter. They probably felt that if SEOs understood exactly how important clicks were, we’d try to manipulate the signal and ruin Google’s results.

Which, I have to admit, is not an entirely unreasonable stance to take. After all, SEOs managed to manipulate Google’s other primary ranking signal: Links.

In the first version of Google, launched to the public back in 1998, the primary ranking metric was PageRank. This is a calculation of a webpage’s quality derived from the hyperlinks pointing to that page from other webpages, where a link from a page that itself has a high PageRank counts for more than a link from an obscure page.

Using links as a ranking factor is what made Google the best search engine at the time, and to this day it is still a core component of their ranking systems.

Link metrics have evolved over time. Google doesn’t call it PageRank anymore, but I feel it’s still an appropriate word to use to describe link value and how it flows between webpages.
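To make the idea of link value flowing between webpages more concrete, here’s a minimal Python sketch of the classic PageRank calculation as described in Google’s founders’ 1998 paper, computed by power iteration with the commonly cited damping factor of 0.85. This is the textbook formula, not Google’s current link metrics (which aren’t public), and the three-page link graph is hypothetical.

```python
def pagerank(
    links: dict[str, list[str]], damping: float = 0.85, iterations: int = 50
) -> dict[str, float]:
    """Classic PageRank via power iteration.

    `links` maps each page to the list of pages it links out to.
    """
    pages = set(links) | {target for targets in links.values() for target in targets}
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}  # start with equal value everywhere

    for _ in range(iterations):
        # Every page gets a small baseline share (the "random jump").
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its rank evenly across its outgoing links,
                # so a link from a high-PageRank page passes on more value.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its rank over all pages.
                for other in pages:
                    new_rank[other] += damping * rank[page] / n
        rank = new_rank
    return rank


# Hypothetical link graph: a and b both link to c, and c links back to a.
ranks = pagerank({"a": ["c"], "b": ["c"], "c": ["a"]})
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(page, round(score, 3))
```

Page c, linked from two pages, ends up with the highest score. Page a scores almost as high despite having only one inbound link, because that link comes from the strong page c. Page b, which nobody links to, keeps only the baseline share.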

A few years ago I wrote an in-depth piece on PageRank and how it works: Introduction to PageRank for SEO. Worth a read if you’re interested in the topic.

Despite Google proclaiming for years – decades – that all you need to do to rank well is produce great content, it turns out that content wasn’t the king of SEO after all.

At least, not directly.

Google is about links and clicks. Links provide the initial signal that a webpage might be worth ranking in search results, and clicks then confirm (or deny) that ranking, as they show how people interact with that webpage.

What does this mean for publishers? Do we need to drastically rethink our approach to SEO? Is an entirely different strategy required?

Well, no. In fact, it’s pretty much business as usual.

Let’s think about this for a moment. To succeed in Google, we now know beyond reasonable doubt that you need to get other websites to link to your site, so that Google understands your content might be worth ranking. And then you need people to click on your page and not immediately return to Google’s results.

So you need to get links, and you need people to click on your stuff. How do you go about achieving these two things?

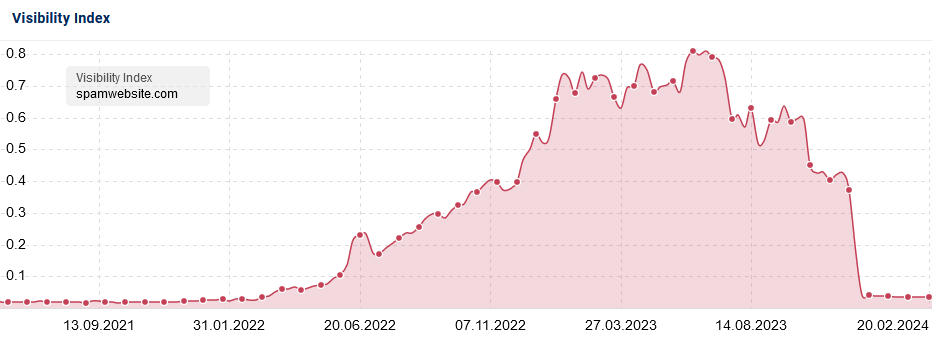

You may think there are ‘cheats’ that you can use to get links and clicks, and you’d be right. You can cheat your way to the top of Google’s results. It’s what spammers have been doing, with some measure of success, for as long as search engines have existed.

And every time, without fail, Google eventually catches on and spam sites lose their rankings. Sometimes Google finds out quickly, and sometimes it takes a while, but inevitably the game is up.

So, let’s assume you want to build a sustainable business in Google’s results, and not try to cheat your way to a temporary peak with an inevitable rapid demise. How do you accomplish lasting success in Google?

Of course, it is about content after all.

The best way to get other websites to link to you is to create content that deserves to be linked to. Content that delivers something of value – an exclusive news story, an insightful piece of analysis, a compelling opinion voice, etc.

And this is something you need to do again and again. Create as many pieces of deserving content as you can, so that you accumulate links from various sources over a sustained period of time.

And then, as the link signals accumulate and Google starts ranking your pages in their results, you need to get people to click on your site and stay there. This, too, is founded on the quality of your content.

Make your content engaging and easy to read. Recommend other, related stories to your audience that are also engaging and easy to read. Provide a good user experience so people enjoy reading content on your site. Ensure visitors to your site can easily find what they’re looking for.

All these things have always been crucial to SEO: Well-structured content, internal linking, fast-loading webpages, strong UX, good site navigation, and so on.

With all this talk about links and clicks, it’s easy to lose sight of the very foundation of a search engine: Someone typing a search query.

Matching webpages to a user’s query is what a search engine does. And this all begins with keywords. Google tries to understand what the user is looking for by analysing the query itself.

Google has made strong progress over the decades in understanding user intent through Natural Language Processing, and understanding how words refer to concepts and entities and their relationships through the Knowledge Graph.

In a previous newsletter I showed how topic pages and internal links can empower your site’s presence in Google’s Knowledge Graph and drive improved rankings. Again, Google understands links very well, which is why good internal linking is such a powerful tactic.

But it all starts with the entities in the Knowledge Graph. Because Google isn’t as smart as humans in understanding content, we need to be explicit with our content.

That means you should use straightforward keywords to describe entities in your content – i.e. you need to use the right words in the right places.

The Top Stories boxes in Google’s results show articles that match the topic of a user’s query. This is essentially keyword matching: Google looks to see if an article’s headline contains one or more keywords from the user’s query.
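The headline keyword matching described above can be sketched in a few lines of Python. This is a deliberately naive illustration, not how Google actually selects Top Stories: the stopword list, the word-splitting rule, and the scoring (a simple count of shared keywords) are all hypothetical.

```python
import re

# Illustrative stopword list (hypothetical); real systems use far richer language processing.
STOPWORDS = {"the", "a", "an", "of", "in", "on", "for", "and", "to", "is"}


def keywords(text: str) -> set[str]:
    """Lowercase the text, split it into words, and drop common stopwords."""
    return {word for word in re.findall(r"[a-z0-9]+", text.lower()) if word not in STOPWORDS}


def match_headlines(query: str, headlines: list[str]) -> list[str]:
    """Return headlines sharing at least one keyword with the query, most overlap first."""
    query_terms = keywords(query)
    scored = [(len(query_terms & keywords(headline)), headline) for headline in headlines]
    # sorted() is stable, so headlines with equal scores keep their original order.
    return [headline for score, headline in sorted(scored, key=lambda item: -item[0]) if score > 0]


headlines = [
    "Google antitrust trial enters final week",
    "Apple unveils new iPhone",
    "What the antitrust ruling means",
]
print(match_headlines("google antitrust trial", headlines))
```

A headline that names the entities outright (‘Google’, ‘antitrust’) gets matched, while a clever but oblique headline that never names the topic scores zero. That is the practical case for using the right words in the right places.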

So saying that Google is all about links and clicks is not entirely correct – it’s also about keywords.

Keywords, links, and clicks. Those are the three main ingredients of Google’s magic formula.

The documents revealed in Google’s antitrust trial show that SEO as we’ve always done it – optimising content for keywords, creating linkworthy content, and providing a great user experience – is exactly how it should be done.

Search and SEO have evolved, of course, with algorithms’ emphases changing and different tactics falling in and out of fashion. Yet the core of SEO is still the same. That’s not something I expect to change anytime soon.

If you want proper deep dives into the Google documents revealed in the antitrust trial, I highly recommend these three articles:

And here’s a selection of recent official updates, interesting articles, and SEO insights that are worth your while.

Official Google Docs:

Interesting Articles:

Latest in SEO:

Lastly, if you’ll allow me some blatant self-promotion, I’m doing a webinar with NewzDash’s John Shehata about advanced technical SEO for news publishers on March 11th. Details and registration here.

That’s it for another (very belated) edition. Thanks for reading and subscribing, and I’ll see you at the next one!